Collaborative Robotics

For Nuclear Facilities

Introduction

I worked at Createc throughout my undergraduate degree and made meaningful contributions to a number of projects as a research engineer for UI/UX and software development.

My most notable work was on the project shown here. Another project involved designing and implementing a VR system in Unreal Engine that allowed users to move around digital twins of hazardous nuclear environments while receiving data about potential contamination around them in the form of surface heat maps. However, that other work is not currently permitted to be shared outside the company.

The team for this project was around 10 people, and we operated through fast-paced iteration and experimentation to support the company's R&D goals. Because of this, it was unusual for a Createc project to be so user-centred this early, but I saw an opportunity to add value to the product by taking the findings from my academic studies and integrating them into this new environment. I learned a lot from my time at the company, and I’m so thankful to everyone there who supported me and my work!

Project Context and Constraints

High-Level Goal:

Design the user journey through two different collaborative robotics systems that share some high-level function, then create one interface which satisfies both systems, and prototype it.

System 1: Uses a pair of robot arms to perform CT scans of welds in situ, with one mobile arm on each side of the weld.

System 2: Uses one robot arm hung from a gantry to scan nuclear waste materials and produce heatmaps of contamination on a model of the object.

Both systems needed to operate in safety-critical environments.

High-Level Constraints:

The front-end of both systems had to run on a new operating system (OS), made by Createc Robotics, that was still in early development. This OS placed many limitations on the UI components and elements that could be used. For example, there could be no moveable windows, and only buttons and sliders were supported.

1. Problem Definition and Initial Strategy

The first step I took was to understand the goals of the systems so that I could clearly identify the top-level similarities and differences in their required flows. What were the theoretical problems these systems would solve, and what had the end customer already told us? I also looked at the functionality already planned by the back-end team.

Once I had a good understanding, I decided to use these similarities and differences to underpin a modular approach to the design. This had two motivations:

Modular phases of the user journeys and interface meant the similar portions could be easily reused, while the differing elements could be highly optimised for their specific use case.

Since the main limitations of the front-end came from the on-going development of the new operating system (OS), having modularity meant that phases could be individually improved and refactored as the OS developed alongside my work.

2. User Research and Persona Creation

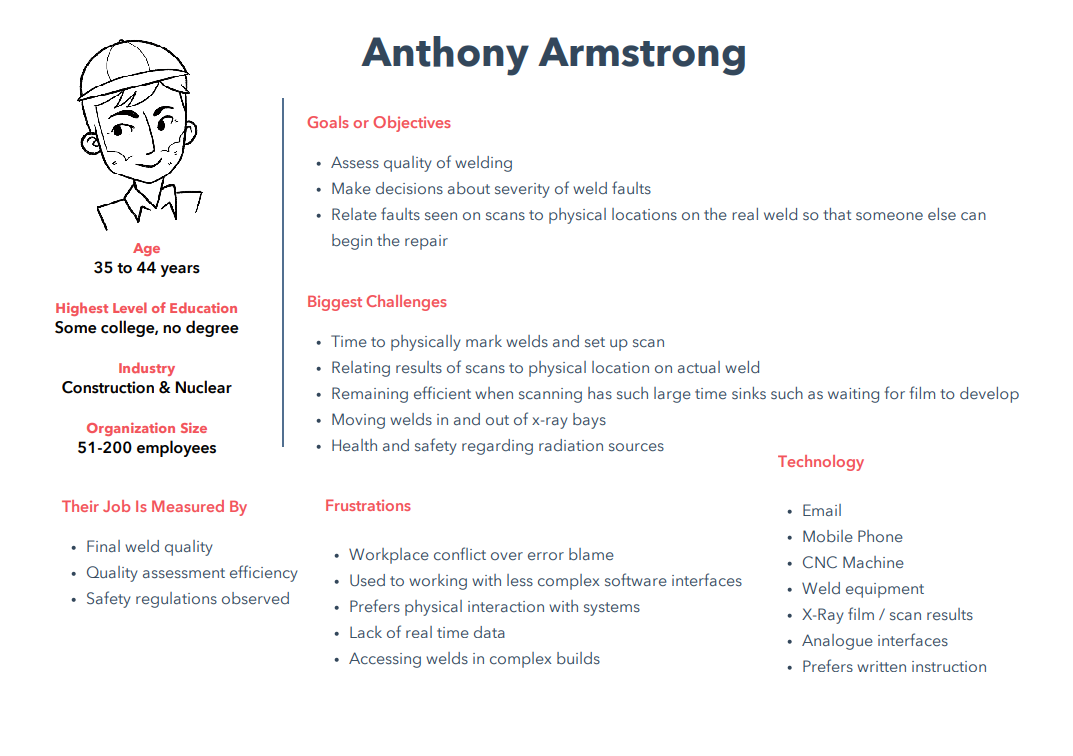

I conducted interviews with staff and managers at the deployment locations, and used these to inform a number of personas.

Importantly, I began to learn about the processes already in place and how these new projects could hope to solve some of the user frustrations. The interviews also revealed an interesting bias. Some senior staff at the welding company had presumed that, because the existing machines had large, tactile, analogue interfaces, the new system should be built the same way. When the operators were consulted, however, they stated no preference for these, and in fact referred more to their personal touch-screen devices.

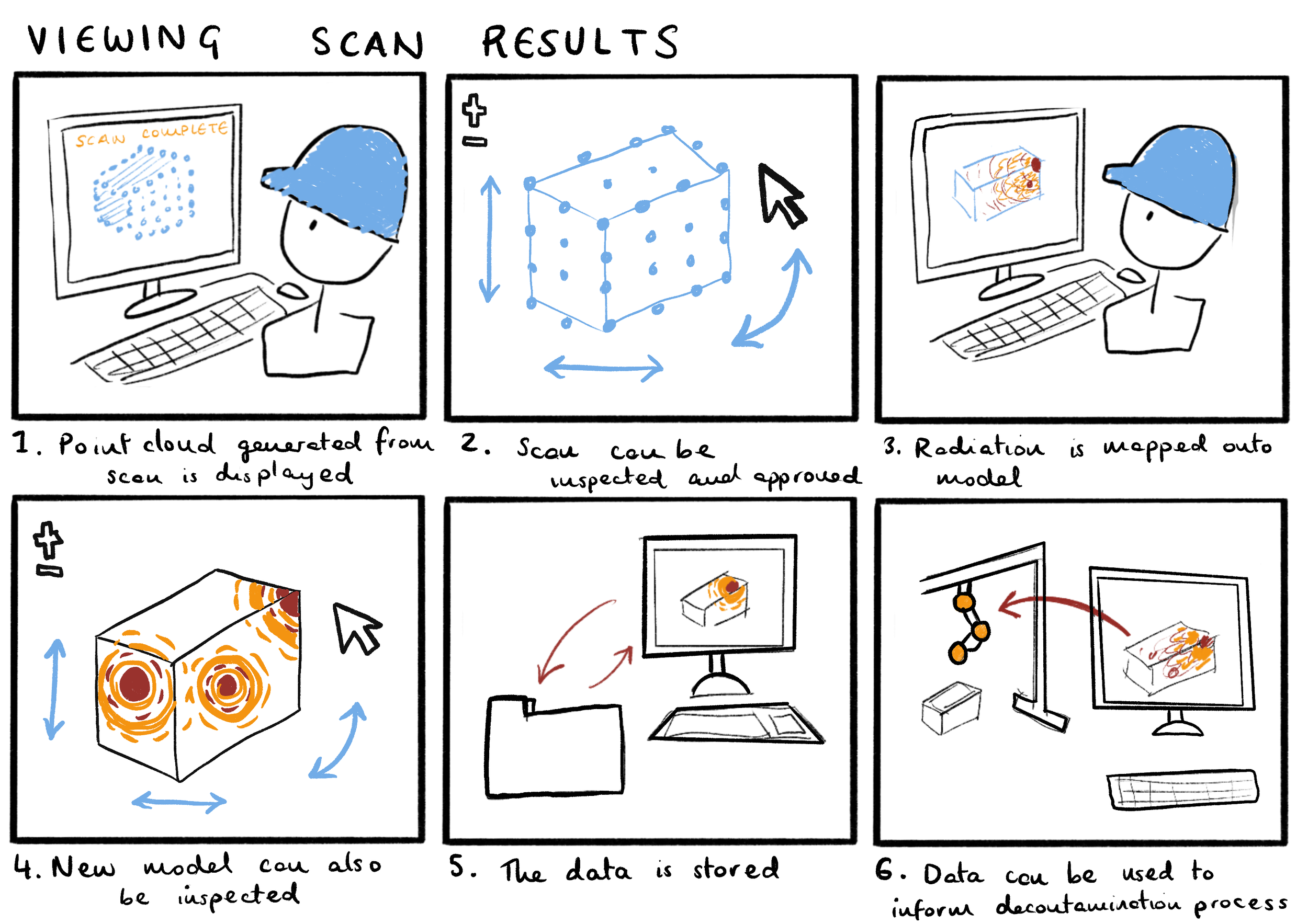

3. Storyboarding, Scenarios and Critical Analysis

One priority of this exercise was the safety-critical nature of the system. It was crucial to instil confidence and a sense of closure in users to reduce anxiety, so it was important to understand which points in the flow might generate the most stress.

Please see some examples of storyboards below:

4. Wireframing and Iteration with Stakeholders

After I understood the user journey through the system and the priority of tasks and information, I began to wireframe the software. At the end of every major iteration, I met with internal stakeholders to gather feedback through a think-aloud evaluation using the interactive wireframes.

Unfortunately, I no longer have access to the interactive versions, but please see some static wireframes below from various stages of iteration:

After several iterations of interactive wireframe prototypes, it was time to create a prototype in Unreal Engine. This was done to:

Provide the developers using Createc’s new operating system with a thorough model of requirements for their implementation

Prove the system’s usability when interfaced with the real-life robot arm

Collect further user feedback

Please see below for a mature, fully interactive prototype. As the model robot arm moves in this video, so does the robot in real life.

Conclusion

The resulting work, including my flyer designs, was demonstrated to customers and shareholders at the end of the project quarter.

The technologies and content included are the property of Createc.